Wednesday, April 24, 2024 | | 6:30pm | - | 9:00pm | Open Skating Cheap-Skate Night | $9.50* |

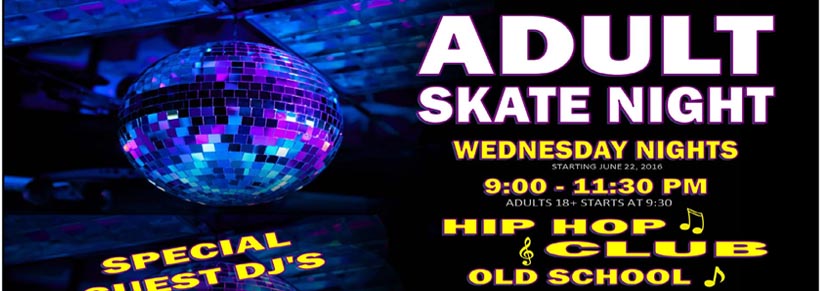

| 9:00pm | - | 11:30pm | Adult Skate Night (18 & Over) | $14.00 |

Thursday, April 25, 2024 | | Available for Private Parties |

Friday, April 26, 2024 | | 3:30pm | - | 5:30pm | After School Skate (Open Skating) | $9.50* |

| 7:00pm | - | 9:30pm | Open Public Skating | $12.50* |

| Double overlapping session. Skate both sessions for an additional $4.00. |

| 9:00pm | - | 11:30pm | Open Public Skating | $12.50* |

Saturday, April 27, 2024 | | 11:15am | - | 1:00pm | Jr. Session Open Skating (10 yo & Younger & Parents) | $9.50* |

| This is an open public skate for kids 10 years old and younger and their parents. |

| 1:00pm | - | 3:30pm | Open Public Skating | $12.50* |

| Double overlapping session. Skate both sessions for an additional $4.00. |

| 2:30pm | - | 5:00pm | Open Public Skating | $12.50* |

| 7:00pm | - | 9:30pm | Open Public Skating | $12.50* |

| Double overlapping session. Skate both sessions for an additional $4.00. |

| 9:00pm | - | 11:30pm | Open Public Skating | $12.50* |

Sunday, April 28, 2024 | | 1:00pm | - | 3:30pm | Open Public Skating | $12.50* |

| Double overlapping session. Skate both sessions for an additional $4.00. |

| 2:30pm | - | 5:00pm | Open Public Skating | $12.50* |

| 6:30pm | - | 9:30pm | Family Night Open Public Skating | $32.00* |

| Family Rate Available $31.00 plus skate rental. Includes up to 5 skaters. Single admission $12.00 plus skate rental. |

Monday, April 29, 2024 | | 6:30pm | - | 9:30pm | Open Public Skating | $12.50* |

Tuesday, April 30, 2024 | | Available for Private Parties |

Wednesday, May 1, 2024 | | 6:30pm | - | 9:00pm | Open Skating Cheap-Skate Night | $9.50* |

| 9:00pm | - | 11:30pm | Adult Skate Night (18 & Over) | $14.00 |

Thursday, May 2, 2024 | | Available for Private Parties |

Friday, May 3, 2024 | | 3:30pm | - | 5:30pm | After School Skate (Open Skating) | $9.50* |

| 7:00pm | - | 9:30pm | Open Public Skating | $12.50* |

| Double overlapping session. Skate both sessions for an additional $4.00. |

| 9:00pm | - | 11:30pm | Open Public Skating | $12.50* |

Saturday, May 4, 2024 | | 11:15am | - | 1:00pm | Jr. Session Open Skating (10 yo & Younger & Parents) | $9.50* |

| This is an open public skate for kids 10 years old and younger and their parents. |

| 1:00pm | - | 3:30pm | Open Public Skating | $12.50* |

| Double overlapping session. Skate both sessions for an additional $4.00. |

| 2:30pm | - | 5:00pm | Open Public Skating | $12.50* |

Sunday, May 5, 2024 | | 1:00pm | - | 3:30pm | Open Public Skating | $12.50* |

| Double overlapping session. Skate both sessions for an additional $4.00. |

| 2:30pm | - | 5:00pm | Open Public Skating | $12.50* |

| 6:30pm | - | 9:30pm | Family Night Open Public Skating | $32.00* |

| Family Rate Available $31.00 plus skate rental. Includes up to 5 skaters. Single admission $12.00 plus skate rental. |

Monday, May 6, 2024 | | 6:30pm | - | 9:30pm | Open Public Skating | $12.50* |

Tuesday, May 7, 2024 | | Available for Private Parties |

Quad Skate Rental . . . . . $3.50 Extra Per Person

Inline Skate Rental . . . . . $4.50 Extra Per Person

Stay Over to Next Overlapping Session . . . $4.00